The Long Hesitation Is Over

For years, major Hollywood studios refused to sue AI companies. The reasons were pragmatic: litigation is expensive, copyright law for AI was unsettled, and — above all — studios did not want to permanently close the door on potential AI partnerships before those relationships had even begun. Even when the Writers Guild of America sent formal letters urging studios to take legal action over the use of Hollywood screenplays in AI training, the majors held back.

That restraint ended in 2025. Disney and Warner Bros. filed separate lawsuits against Midjourney, the AI image-generation service, and the legal strategy they chose signals something bigger than a one-off dispute.

"The target is not what the AI learned — it's what the AI shows you."

Output, Not Input: A Deliberate Legal Strategy

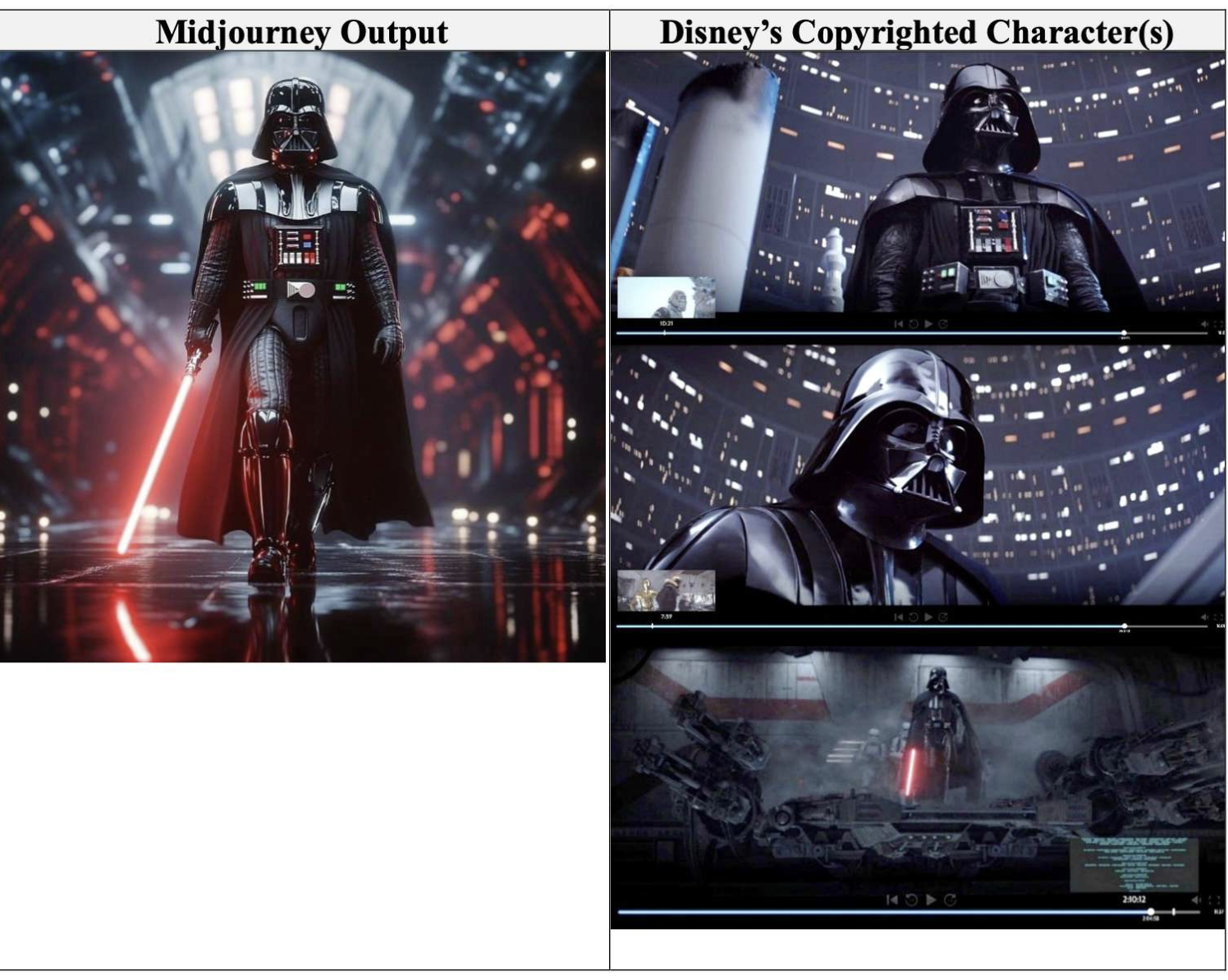

What sets these Hollywood lawsuits apart from the music-industry copyright battles is where the legal crosshairs are aimed. Music-rights cases have focused largely on training data — whether AI models were built on copyrighted songs without licenses. The studios have chosen a different battlefield.

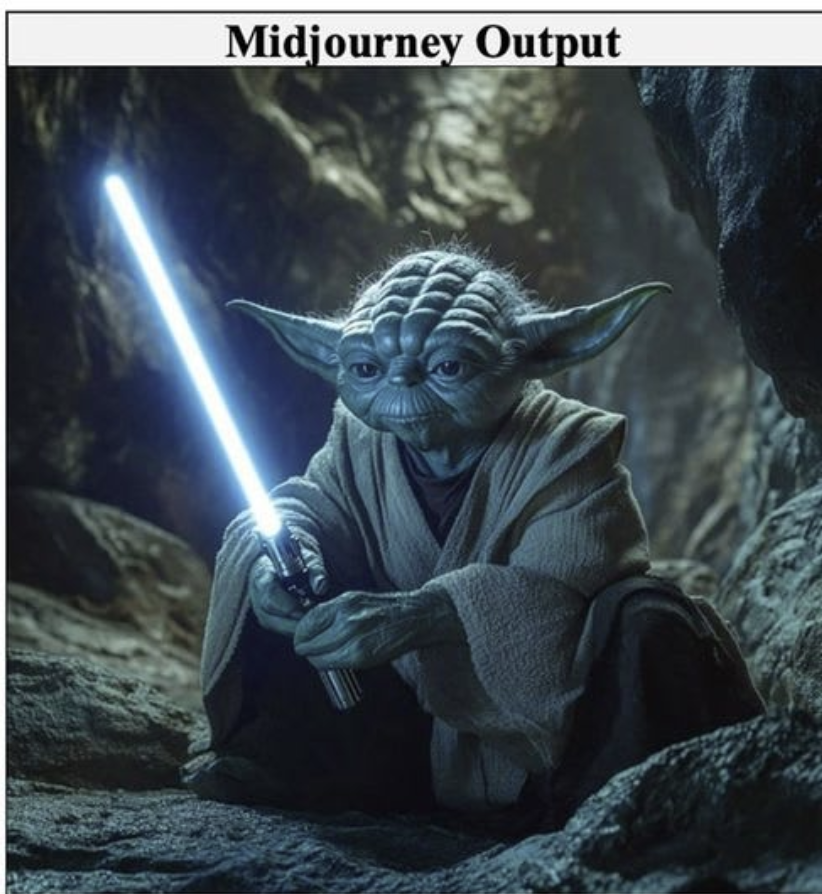

Disney and Warner Bros. are arguing that Midjourney's AI output reproduces recognizable, proprietary characters — Mickey Mouse, Batman — with enough fidelity and frequency that ordinary users can identify them, even when prompted with fairly generic instructions. The complaint is not that the model was trained on these works; it is that the model keeps generating them.

This distinction is deliberate. It points toward an emerging doctrine: training on copyrighted works may receive some degree of fair-use latitude, but if a commercial AI service consistently reproduces protected characters in its output, liability can attach regardless of how the model was trained. Some legal analysts expect this to become the dominant framework for AI copyright litigation.

Even if both cases settle before a final ruling — the more likely outcome — the studios will have permanently changed the industry's calculus. Every AI company now knows that character reproduction in output is a litigation trigger, not a gray zone.

KEY EVENTS TIMELINE

The Seedance Moment: When Deepfakes Went Viral

If the Midjourney lawsuits represent the institutional front of this battle, ByteDance's Seedance 2.0 deepfake video illustrates its human stakes. A 15-second clip, generated by Seedance and showing Tom Cruise and Brad Pitt in an apparent rooftop brawl, spread across global social media platforms within hours. The Motion Picture Association and every major Hollywood studio condemned it as a violation of both copyright and the actors' likeness rights. ByteDance's global commercial launch plans for Seedance were derailed almost immediately.

The video did what no legal brief could: it made the abstract threat visceral and visual. For studio executives, talent unions, and policymakers alike, it demonstrated precisely what unrestricted AI video generation can do to real human identities — without their consent, without compensation, and at mass scale.

Seedance 2.0 showed the world what the studios had been quietly fearing for three years.

The Disney–OpenAI Divorce

Disney's exit from its reported $1 billion investment-and-licensing arrangement with OpenAI is equally revealing. On the surface, the split coincided with OpenAI's decision to wind down Sora, its video generation service. But insiders describe a deeper internal reckoning: Disney's executives and creative talent had grown increasingly uncomfortable with how AI video tools might handle the company's character IP, and what the proliferation of AI-generated content would mean for the labor market in film and television production.

Framed this way, the breakup is not a defeat for either party — it is a clarification. Disney is signaling that any AI partnership must come with explicit, auditable guardrails on character reproduction and with structures that protect its creative workforce. That is a much higher bar than most AI companies have been willing to accept to date.

What the New Rules Will Look Like

The legal and commercial logic now points in a clear direction. Once a court, or a widely observed settlement, draws a line around AI output that reproduces protected characters, the market will restructure around that line. AI companies that stay inside it by negotiating formal IP licenses with rights holders will be able to operate freely. Those that cross it will face immediate litigation risk.

A clear line actually increases the potential value of Disney-style IP licensing and investment packages. A premium tier of officially licensed AI reproduction rights, available only to vetted partners, becomes a scarce and valuable asset. The Disney–OpenAI model, though it collapsed this time, is likely to resurface in a more carefully structured form.

For AI companies, the implication is equally clear: designing an 'IP safety layer' — technical and contractual systems that prevent unauthorized character reproduction in output — will become a baseline competitive requirement, not a nice-to-have.
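
To make the idea concrete, here is a minimal sketch of what the technical half of such a layer might look like. It is an illustration only, not any company's actual system. Every name in it (ProtectedCharacter, IPSafetyLayer, check) is hypothetical, and it assumes that some upstream recognition step, not shown here, has already labeled which characters appear in a generated image or video. The contractual half is reduced to a simple set of rights holders with whom a license is assumed to exist.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ProtectedCharacter:
    """Hypothetical registry entry for one protected character."""
    name: str
    rights_holder: str
    aliases: frozenset = field(default_factory=frozenset)


@dataclass
class IPSafetyLayer:
    """Hypothetical output gate: blocks unlicensed protected characters."""
    registry: list
    licensed_holders: set = field(default_factory=set)

    def check(self, detected_labels):
        """Return (allowed, blocked_names) for labels found in one output.

        detected_labels is assumed to come from an upstream character
        recognizer, which this sketch does not implement.
        """
        detected = {label.strip().lower() for label in detected_labels}
        blocked = []
        for character in self.registry:
            names = {character.name.lower()} | {a.lower() for a in character.aliases}
            matched = bool(names & detected)
            licensed = character.rights_holder in self.licensed_holders
            if matched and not licensed:
                blocked.append(character.name)
        return (not blocked, sorted(blocked))


# Example: a service licensed by one rights holder but not the other.
registry = [
    ProtectedCharacter("Mickey Mouse", "Disney"),
    ProtectedCharacter("Batman", "Warner Bros.", frozenset({"the dark knight"})),
]
layer = IPSafetyLayer(registry, licensed_holders={"Disney"})
print(layer.check(["Batman", "city skyline"]))  # (False, ['Batman'])
```

The check runs on what the model produced rather than on what the user typed, which mirrors the output-focused theory of the lawsuits. A real system would presumably need far more than exact label matching, but the sketch shows how a license changes the result for the same output.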

What This Means for K-Content and Korean AI Companies

These developments carry direct and urgent implications for the Korean entertainment and technology ecosystem.

THREE ACTION ITEMS FOR KOREAN PLAYERS

Once the boundary is drawn, the rules of the game become much clearer — and the players who prepared for it win.

The Bottom Line

The lawsuits filed by Disney and Warner Bros. against Midjourney are not routine commercial disputes. They are the opening argument in what will become the foundational legal framework governing how generative AI can interact with existing intellectual property. The Seedance deepfake scandal and Disney's withdrawal from its OpenAI partnership are complementary signals that the industry's patience with unregulated AI character reproduction has run out.

The boundary that emerges from these cases — in court rulings or in settlements that the entire industry watches — will function as a guide rail for every AI-content partnership deal that follows. Companies and countries that build their AI strategies around that boundary now, rather than waiting for the verdicts, will be best positioned to operate in the world that comes after it.

© 2026 K-EnterTech Hub · kentertechhub.com · All rights reserved