Curious Refuge built a 172-country, 95%-professional student base in three years. NYU Tisch's expanded Runway deal and USC's new "AI Institute for Actors" arrived a week apart. The talent value chain itself is being rewired — yet 51% of U.S. viewers still reject AI actors.

No campus, no soundstage, no 30-year-old editing suite. Yet in three years Curious Refuge has signed up several thousand students across 172 countries, with 95% already working in film or advertising. All instruction is online.

The graduation requirement is a short film made primarily with AI. The school's homepage claim — "the world's first home for AI storytellers" — is backed by a Cannes Film Festival quote calling it "the world's most recognized AI for filmmakers training platform" (curiousrefuge.com).

Once that new model proved the demand, America's elite incumbent film schools moved. In the space of one week in April, two announcements landed back-to-back.

On April 10, The Hollywood Reporter (THR) reported that NYU's Tisch School of the Arts had expanded its collaboration with the generative-video AI company Runway, opening up free credits and the company's full suite of tools across three programs.

Six days later, on April 16, USC's School of Dramatic Arts launched the Institute for Actor-Driven Innovation (USC-IAI), with Adobe as its sponsor. Further out, CalArts has taken funding from the Chanel Culture Fund for AI research and creation; the Sundance Institute received a $2 million grant from Google.org to launch an AI Literacy Alliance (THR 4/10, 4/16; The Ankler 5/5).

These moves signal that the talent value chain in the screen industries has entered a phase of being rewired, with Big Tech capital as the axis. The old single-direction supply chain — school to talent to studio — is being reshaped into a circular structure: Big Tech to school to talent to studio and Big Tech. The school is no longer a neutral feeder of labor but the on-ramp into a tool lock-in.

Several forces are pushing the change at once. Studios have learned, on actual projects, that AI compresses cost and timeline in capital-intensive stages such as trailer mockups, pre-visualization, and visual-effects (VFX) backgrounds. The refresh cycle on AI video tools has shrunk to roughly a quarter, turning curation — picking which tool to use, when, and how — into a discrete new specialization.

The Writers Guild of America (WGA) and the Screen Actors Guild–American Federation of Television and Radio Artists (SAG-AFTRA) have used collective bargaining to narrow how AI can be used on the job; the classroom, sitting outside the labor market the contracts cover, has become a de facto workaround. And from a Big Tech market-entry standpoint, locking in the workflow of the next generation of showrunners and studio executives at the schoolroom level is the most efficient lifetime-value (LTV) play available.

The Korean content industry is already inside this ecosystem. Open Curious Refuge's online "AI Film Gallery," and the most prominent slot on the front page is occupied by an AI-generated commercial for NCSoft's mobile MMORPG "Lineage M" (Kent Castle) — a Korean game's global ad sitting at the center of an American AI school's worldwide showcase.

■ The new model's starting point — Curious Refuge's business structure

Curious Refuge, the starting point of this wave, is structurally different from a traditional film school. Founded in 2023 by Shelby Ward and Caleb Ward, the school skips the campus and physical infrastructure and treats the curation of quarterly-refreshed AI video tools and workflows as its core value proposition.

Seven core courses are offered: AI Filmmaking, Advanced AI Filmmaking, AI Advertising, AI Documentary, AI Animation 2.0, AI VFX and AI Screenwriting. Tuition runs $749 per course, with $200–$500 in additional tool fees to produce a single short. The school has signaled on its site a coming move to a subscription membership bundling the full course library with one-on-one feedback.

The published partner roster is essentially the school's sales sheet. AMC, Porsche, Meta, Apple, TikTok, Google, Microsoft, Volkswagen and the British VFX house DNEG appear on the official site. VFX artist Michael Eng ("Black Panther: Wakanda Forever") told Reuters that paid project requests began arriving as soon as he completed the program.

The business architecture becomes clearer through the parent company, Promise. Promise's investor base includes Google, Peter Chernin's North Road, and Michael Ovitz's Crossbeam — Big Tech and Hollywood capital in a single line. Promise co-founder and president Jamie Byrne, a former YouTube executive, told THR: "We knew the competition for the best generative AI artists was going to become fierce, so we needed a system that always knows who the best up-and-coming talent is."

Promise and its school Curious Refuge sit inside the same capital structure and run scouting, training and recruiting as a single funnel on one platform. The result is a vertically integrated model that bundles education, agency-style talent representation, and studio production. It is a fundamentally different shape from the traditional film school, which bought its cameras and editing software from outside vendors.

■ Incumbents respond — NYU Tisch and USC change shape

Once the new model had proved itself, America's two leading film schools responded within a single week in April. The two responses look different on the surface, but the same Big Tech capital flows into both.

The NYU Tisch–Runway deal, announced April 10, is an infrastructure-style transaction that makes "tool access" effectively free for students.

It covers the Interactive Telecommunications Program (ITP), Interactive Media Arts (IMA) and the NYU-wide Hyper Cinema Lab housed at Tisch, three programs that all sit at the intersection of technology and creative practice. Students will receive access to Runway's full suite of AI tools for required coursework and personal projects (Runway, 4/10). The collaboration was framed by both sides as an expansion of an existing relationship rather than a brand-new contract.

Tisch dean Rubén Polendo, recipient of a 2025 Guggenheim Fellowship for AI and Performance, framed the move in terms of institutional continuity: "AI is having a transformative impact on the creative fields that Tisch students are preparing to become leaders in. For 60 years, our school has emphasized rigorous inquiry and creative innovation to shape the next generation of artists, and we are excited to continue that tradition with Runway." THR noted, however, that the mainline film school — the program that produced Spike Lee, Todd Solondz and Kasi Lemmons — "is not part of the agreement at this time." The line between the AI-forward technology programs and the traditional film school inside the same building has been deliberately drawn.

Runway co-founder and CEO Cristóbal Valenzuela's statement carries an under-the-surface signal. "NYU Tisch has always been a place where the next generation of creative leaders are trained. ITP was essential education for me and my co-founders — it's where Runway was born." Runway's founding came out of NYU's ITP.

The company is now circling back to the program that trained its founders, this time as a tool supplier. In a separate THR interview Valenzuela expanded the framing: "Twenty years ago when you went to film school or art school, the thing they gave you was a camera and maybe an Adobe subscription. Now they're giving you access to Runway — which lets you do pretty much anything you want. For newer generations, this is the new normal." He added that resistance inside studios, schools and companies "is a very small minority — not in any way shaping the discourse anymore."

USC-IAI, launched six days later, is the mirror-image model. Where NYU widens tool access, USC focuses on "helping actors who were being displaced by AI redefine their professional identity around using AI instead." Adobe is the institute's sponsor (THR 4/16).

USC School of Dramatic Arts dean Emily Roxworthy laid out use cases including scene readings with a resurrected Laurence Olivier, advisory help with launching a production company, and an "AI agent" for unsigned students — a system that automatically scans casting breakdowns (sheets that describe roles being cast) and matches relevant audition opportunities. A joint course with USC Law School on managing one's likeness is under consideration. Day-to-day operations are run by Tomm Polos, chair of USC's "creator arts" program.

On the surface the two approaches sit at opposite ends. NYU is putting the tools in students' hands as widely as possible. USC is teaching students how to interpret and manage the rights and identity shifts those tools produce. Trace the capital and the tool supply lines, however, and the two land on the same coordinate.

A video creator who learned Runway at Tisch and an actor who learned likeness management and AI-agent use at USC will both begin their careers inside the same Big Tech ecosystem. The on-ramps are diversified; the destination platform stack is the same. Roxworthy framed the institute's stance carefully — "we are not here to proselytize, we are here to make sure students understand" — but at the market level the two models converge.

Pull the lens back further and CalArts and Sundance fit on the same trajectory.

The Chanel Culture Fund underwrites AI research and creative experimentation at CalArts. Google.org's $2 million grant is funding Sundance's AI Literacy Alliance (The Ankler 5/5). A consistent pattern is visible across U.S. screen and arts education infrastructure: similar capital types entering through different doors at the same time, using education and incubation infrastructure as the front-end on-ramp into Big Tech tool ecosystems.

■ The structural shift — comparing five axes of the talent value chain

The point of this transition is not the arrival of a new tool. It is that the path through which the tool reaches the schoolroom and the industry is simultaneously redrawing the value chain's capital flow, talent pipeline, and standard-setting authority. Five axes show the shape of the change.

[Screen-industry talent value chain — before vs. now]

All five axes have moved together — that simultaneity is the defining feature of this transition. Cameras and editing software have always been built by outside companies, but in that earlier era schools were buyers, and decisions about how tools were used were divided between academic departments and working sets.

Now the tool supplier is delivering tools, capital, internships and hiring channels to the school as a single bundle. The line between who teaches a tool and who built it is dissolving. The Ankler's Erik Barmack calls the result "closer to onboarding than education." Where the old model exposed students to multiple tools and left labor-market choices to be negotiated later, the new model effectively enrolls the student into a specific tool ecosystem from day one.

The erosion of union leverage is the hardest-to-reverse consequence. Collective bargaining works inside the labor market. The classroom sits outside it. If the tool ecosystem in a graduate's hands is already a Big Tech ecosystem at the moment they join a union, anything the contract does to define "scope of use" amounts to after-the-fact correction. The deeper that classroom-stage lock-in becomes, the smaller the zone where unions can intervene, and the more bargaining gravity tilts toward Big Tech and the educational institutions taking its money.

■ The "two-prompt" myth — why curation became the new barrier to entry

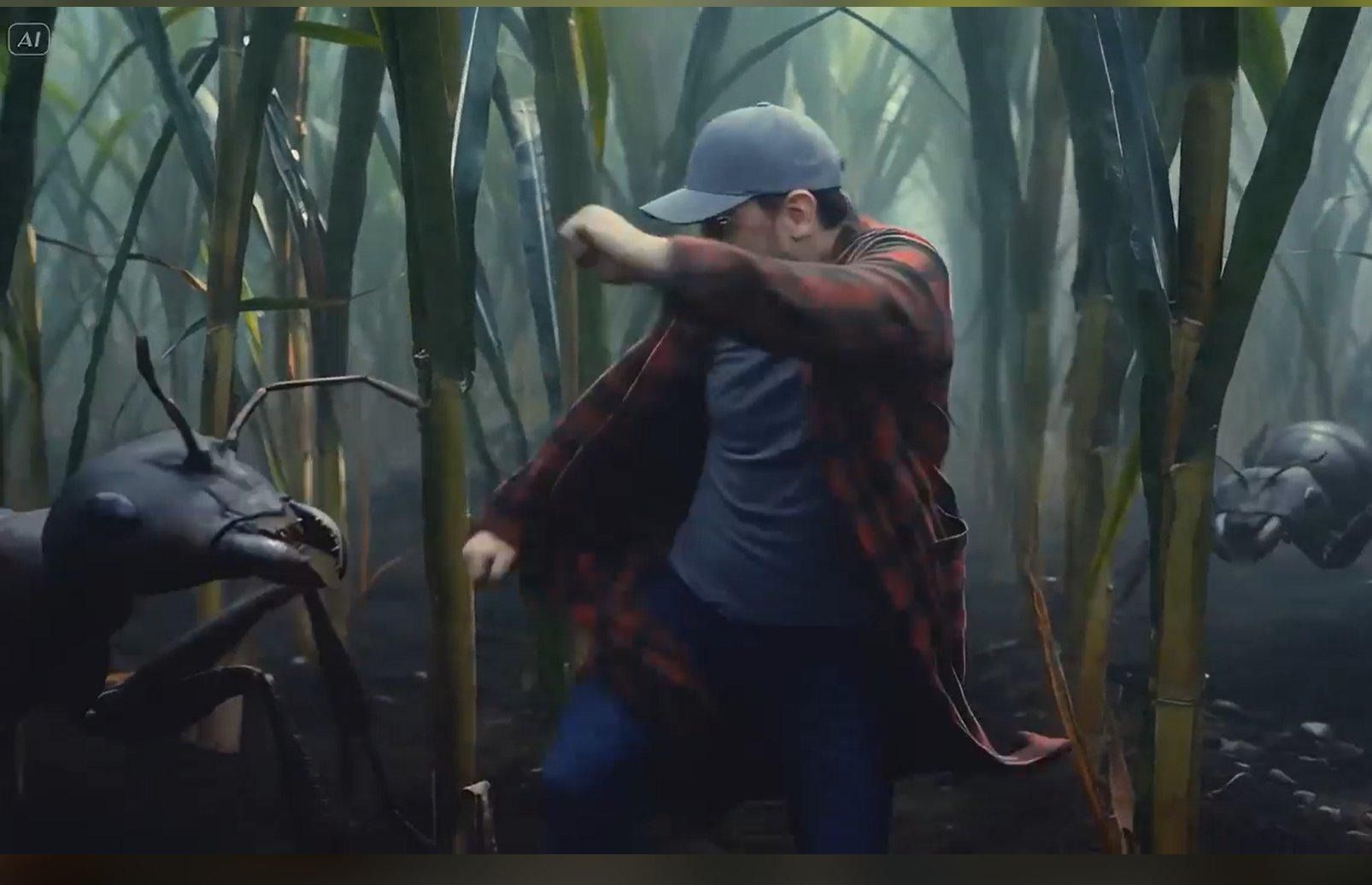

A frame from an AI-generated short film. The scene is composed using generative tools rather than on-set photography. (Source: Curious Refuge AI Film Gallery)

If "a feature film from two prompts" were literally true, the market value of an AI film school would converge to zero. Anyone could pick up the tools and start producing finished work. The reason schools and private programs continue to charge $749 per course and fill seats is that, in practice, the learning curve is steeper than the headlines suggest, and curating that curve has become a new entry barrier in itself.

Producing a credible short demands more than typing "make this look like a horror movie." A creator has to choose between, say, a 1980s 35mm Panavision look and the texture of a Sony FX3 digital cinema camera. Color grading, lens distortion, grain, sound effects and score open up dozens more decisions. A search for a single werewolf howl effect returns hundreds of royalty-free files, and selecting one that fits the scene's mood, licensing terms and format is itself a step. The tools change every quarter — interfaces shift, prompt grammars get adjusted, resolution and frame-rate and physics-simulation options come and go.

THR likened an AI film course to "a degree in English where every few months the rules of grammar change." Curious Refuge CEO Caleb Ward puts it more bluntly: "You still have to understand the fundamentals of storytelling. The people in our community making the best AI films are all working professionals." Generative tools accelerate execution; structure, mise-en-scène and rhythm still depend on human experience.

This barrier works in two directions. For schools and educational vendors it creates real market value — students reach professional output faster by paying for a curated workflow than by trying to assemble one from scattered tutorials.

For Big Tech it creates a channel that turns one company's tools into the de facto industry standard. The moment tool curation becomes the school's business model, the school has a structural incentive to accept scholarships, research grants, and free credits from tool vendors, and to favor specific ecosystems at the curriculum-design stage. The myth of "two prompts" hides a real gap — between people who know which tool to use, when, and through what tool chain, and people who don't. That gap is the school's revenue source. It is also the hidden gateway into a Big Tech tool ecosystem.

■ Supply-demand fracture — 51% of U.S. viewers reject AI actors

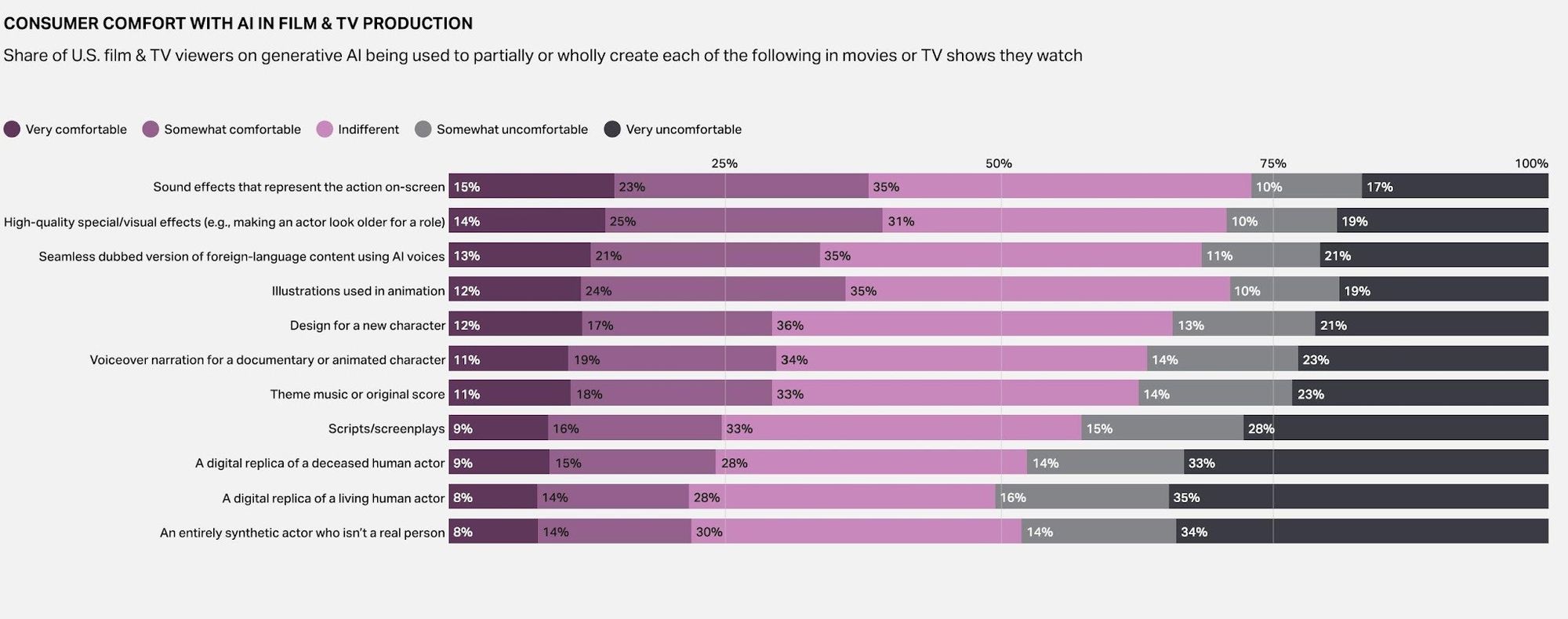

As fast as the education market is moving, the end consumer, the viewer, is not moving in lockstep. Acceptance varies sharply by use case. Where AI handles technical or post-production work, audiences are broadly tolerant. The moment AI replaces a human on screen, rejection climbs to roughly half of viewers, peaking at 51% for digital replicas of living actors.

U.S. viewers' comfort with generative AI in film and TV, by use: comfortable for sound effects and VFX, hostile to digital replicas of living actors. (Source: U.S. film & TV viewer survey)

Note: "Comfortable" combines "very" and "somewhat" comfortable; "Uncomfortable" combines "very" and "somewhat" uncomfortable. Rows that do not sum to 100% reflect distribution differences within categories.

On-screen action sound effects, high-quality VFX and AI-voiced dubbing all sit in roughly the same band: comfort in the high 30s, indifference in the mid-30s, rejection only in the high 20s. Audiences are willing to treat AI as a competent technical assistant. The shape inverts when AI moves to the front of creation. Voiceover, score, and screenplay draw rejection in the high 30s to low 40s. Digital replicas of living actors draw 51% rejection, entirely synthetic actors 48%, and digital replicas of the deceased 47%. The market signal is consistent: viewers do not want AI sitting in a person's chair.

This 51% wall is the structural fracture between the classroom and the market. Inside the classroom, students experimenting with synthetic-actor and digital-double tools are imagining the next Tilly Norwood, last fall's headline-making AI synthetic actor. The market they enter on graduation day looks more conservative than the one their syllabus assumes. USC's framing of its institute around "how an actor manages AI" rather than "how to use AI tools" reads as a strategic response to that wall.

The supply-demand speed gap will likely become one of the screen-content market's main friction points over the next five years. The classroom is producing AI-fluent talent at an accelerating rate; viewer acceptance of human substitution is flat or retreating. If the gap is not closed, the AI narrative gets re-priced not by adoption velocity but by what audiences are emotionally and ethically willing to accept.

■ Regulatory domino — the Academy's new "human-authored" rule

The Academy of Motion Picture Arts and Sciences will overhaul its Oscar eligibility rules next year, introducing the first formal screen-industry guideline aimed squarely at generative AI. Screenplays must be "human-authored" to qualify for nomination; acting categories require performances "demonstrably performed by humans with their consent." AI-written scripts and AI-generated or composited performers are effectively pulled out of the awards race; AI use in post and effects remains permissible without specific sanction.

This is a step beyond the prior "Don't Ask, Don't Tell" posture and is the first official signal that the AI film ecosystem is being routed onto a separate track from the awards-track mainstream. The Academy keeps in place its standing principle that the use of generative AI or digital tools "will neither help nor harm a film's chances of nomination," while reserving the right to ask producers for additional information about AI use and the human creative contribution. Not a blanket AI ban — a reaffirmation that human authorship and performance are at the center of evaluation (The Ankler 5/5).

Two market consequences follow. First, similar or identical principles are likely to spread to Cannes, Venice, Berlin and the submission rules of the major global OTT platforms. With several festivals and bodies already moving on "human-authored" or human-contribution disclosure, the Academy's standard becomes a de facto reference for the red-carpet tier. Second, once AI-generated work is routed off the mainstream awards track, a structural complaint stops being rhetorical: students are taking on six-figure tuition debt to learn workflows that Hollywood is institutionally trying to slow down. "Paying to learn what the industry is trying to delay" turns into a description of the deal.

The fact that studios' strategic center of gravity is hybrid production — real cast and crew shooting on a soundstage with backgrounds and effects filled in by generative AI — rather than "AI-only filmmaking" matters here. Industry observers describe the on-set work as recognizable; the post pipeline is where AI has gone deep. Promise's Jamie Byrne told THR: "You can do it at much more efficient cost and on a much faster timeline. People don't realize this is already happening — because it fits nicely into the existing ecosystem." If that hybrid model hardens, the most direct shock is to VFX, art, and sound — the post-production crafts.

Labor-market projections are not encouraging. The Ankler reports a survey of about 300 entertainment industry leaders in which roughly three-quarters said AI will support job elimination or consolidation, with around 200,000 positions falling within direct or indirect impact. The Academy's "human-authored" standard, framed as a protection for human creators, also reads as a signal that accelerates role redistribution and restructuring around hybrid AI–human production. Regulation, technology, awards, education, studios and unions are now pushing on each other in series. This is the early stage of a regulatory cascade.

These moves are not isolated. They sit at the end of a chain of artist-rights campaigns running across music, film and TV that have argued, since 2024, that unconsented AI training is a rights violation. Hundreds of artists — Billie Eilish, Kate Bush and Scarlett Johansson among them — have signed open letters and joined campaigns demanding AI training restrictions. The Academy's human-authored rule reads like the first instance in which that campaign energy gets coded into film-industry rules of the road.

■ Korean content industry coordinates — tool, education, regulation

Korea is not outside this current. NCSoft's Lineage M sitting on Curious Refuge's gallery front page is the proof point. A Korean operator made a global marketing video using American Big Tech AI tools, and the result became part of an American AI film school's worldwide showcase. As K-dramas, K-content, FAST channels and U.S. ATSC 3.0 broadcasting all push toward broader global distribution, the same dependency is positioned to root deeper into the entire value chain. Left alone, this becomes "subcontractor-style globalization" — production efficiency captured, while technology, standards and norms are outsourced to non-domestic operators.

The industry response has to move on three axes simultaneously: tools, education, regulation.

On the tool axis, strategic alliances between domestic AI video startups and the broadcasters, platforms and production companies need to move quickly. Sustained dependency on Adobe, Google and Runway will, over time, erode pricing leverage and data-negotiation leverage in global deals. At the same time, hybrid-production guidelines that assume AI assistance need to be drafted inside a social-consensus framework that includes unions, writers and performers. A standing forum bringing together the Korea Broadcasting Performers' Rights Association (KBPRA), the Korean Screenwriters Association, SLL, Studio Dragon and other principal stakeholders should pre-coordinate scope of AI use, credit attribution and compensation structure.

On the education axis, the role of Korean film and screen training institutions is decisive. The Korean Academy of Film Arts (KAFA), Korea National University of Arts School of Film, TV & Multimedia, and the screen-related programs at Dankook, Chung-Ang, Dongguk and Hanyang Universities should not simply import the U.S. curriculum. Critical AI literacy, which means teaching not only how to use the tools but how to question them, needs to enter the regular curriculum. The USC-IAI model also points to a parallel task.

Performer training institutions such as Korea Broadcasting Arts Education Promotion Institute and Dong-Ah Institute of Media and Arts should pilot practical tracks covering likeness rights and rights of publicity, contract architecture for working with AI scene partners, and bargaining over digital doubles. That is the minimum safeguard for ensuring that new actors and crew enter the workforce already attuned to rights questions in the AI era.

On the regulatory axis, speed of design is the variable. The Korea Copyright Commission, the Korea Media Rating Board, and the broadcasting and telecommunications regulators should work in tandem with the in-progress draft of the Audiovisual Media Services Act to put basic ex-ante guardrails in place: source-and-legality disclosure for AI training data, prior consent for digital-double and synthetic-voice use, and AI-generation labeling.

If the Academy's "human-authored" standard spreads as a global submission norm, K-content lacking equivalent compliance will face eligibility disputes at international festivals, awards and platform-screening checkpoints. The Tilly Norwood case — in which Scottish actor Briony Monroe alleged her face and mannerisms were modeled without consent — is no longer a foreign concern for Korean performers. The case has the potential to become the trigger for revisions in standard contracts and rights frameworks.

■ Implication — one school's start, the whole chain's redesign

A new educational model born outside the campus system has, in three years, moved both of America's leading film schools. The starting point was Curious Refuge — an AI filmmaking program.

The destination is the entire screen-industry talent value chain being redesigned. With tool generations turning over on a quarterly clock, the decision-making cycles inside schools, studios, unions and regulators have to run faster than they used to. If they cannot, the standard-setting authority over technology, production norms and data governance defaults to the foreign Big Tech players that own the tools. Whether the Korean media and content industry can reset that clock at its own pace is now a core variable for K-content's global competitive position over the next five years.

SOURCES:

• Runway, “Runway Expands Collaboration with NYU Tisch School of the Arts,” April 10, 2026.

https://runwayml.com/news/runway-expands-collaboration-with-nyu-tisch-school-of-the-arts

• Steven Zeitchik, “USC Has Just Launched an AI ‘Institute’ for Actors,” The Hollywood Reporter, April 16, 2026.

• Steven Zeitchik, “Some NYU Film Students Will Now Be Given Tools to Make Movies With AI (Exclusive),” The Hollywood Reporter, April 10, 2026.

https://www.hollywoodreporter.com/movies/movie-news/nyu-film-school-runway-ai-tools-spike-lee-1236560902/

• James Hibberd, “As Hollywood Panics Over AI Job Losses, a Startup Says It Has the Answer,” The Hollywood Reporter, March 25, 2026.

https://www.hollywoodreporter.com/movies/movie-features/curious-refuge-ai-film-school-hollywood-1236546505/

• Erik Barmack, “USC to NYU: AI's Stealth Film School Takeover Has Begun,” The Ankler, May 5, 2026.

https://theankler.com/usc-to-nyu-ais-stealth-film-school-takeover-has-begun/

• School materials: https://curiousrefuge.com (courses, membership, AI Film Gallery)

• Viewer-perception data: U.S. film & TV viewer survey on consumer comfort with AI in film & TV production.

Written by K-EnterTech Hub Industry Analysis Desk · MediaGPT Column.