Why 95% of AI Pilots Fail: Sandy Carter Reveals the 7 Pattern-Matched Traits of AI Systems That Actually Deliver ROI

"The technology is not the problem. The 95% failure rate comes down to leadership, data, change management — and a fundamental misunderstanding of what business you are in." — Sandy Carter, SXSW 2026

On March 18, 2026, AI strategist and bestselling author Sandy Carter took the keynote stage at SXSW — the world's premier innovation and media festival in Austin, Texas — to deliver a session that cut through the enterprise AI hype with hard data. Titled 'From Pilot to Payoff: 7 Pattern-Matched Traits of AI Systems That Actually Work,' the talk drew on Carter's research across more than 450 companies to answer a single critical question: why do so few AI pilots ever become production-grade systems?

Carter, who serves as Chief Business Officer of Unstoppable Domains and has worked in AI since 2013, built her case around a striking number: of 1,500 companies she interviewed for her book, only 20% successfully moved an AI project from pilot to production. The rest stalled — not because of the models, but because of the humans around them.

THE FAILURE LANDSCAPE

95% of Pilots Are Stalling — and Technology Is Not to Blame

"You've probably seen the MIT report showing that 95% of AI pilots today are failing — not producing return on investment," Carter opened. "Most people conclude that it's the technology causing the problem. It really is not."

Her research across 1,500 companies, published in her book AI First, Human Always, found that the 20% of companies which successfully transitioned AI from pilot to production shared three common practices: an unrelenting focus on business outcomes, a serious investment in data infrastructure, and disciplined change management. The other 80% typically optimized for the technology first.

TRAIT #1 — LEADERSHIP

CEOs Using AI Make Projects 5.22x More Likely to Succeed

At a roundtable with 20 global CEOs at the World Economic Forum in Davos, Carter asked a simple question: how many of you have used AI in the last week? Only three raised their hands. The implications of that gap, she argued, directly affect enterprise AI returns.

[Source] WalkMe AI Survey 2026 (pre-release data shared with Carter for this session)

The data, drawn from WalkMe's forthcoming AI survey, shows that leadership engagement doesn't just signal cultural buy-in — it is statistically one of the strongest predictors of AI ROI. "They're talking about it. They're putting it into the culture," Carter said. "And the questions they ask change everything."

At her own company, a shift from 'How do we automate our customer service?' to 'If we rebuilt customer service from scratch knowing AI exists, what would it look like?' led to an agent that now handles 47% of all customer inquiries across 4.8 million global users — while raising customer satisfaction scores by 4 percentage points.

The Trust Gap: 65% of Executives vs. 17% of Employees

A second leadership challenge Carter identified is what she calls the "trust gap." When she visited a Fortune 100 company, team leads admitted, once the executives had left the room, that they were using workarounds to keep the AI dashboard green. WalkMe's data confirms the pattern: 65% of executives trust AI outputs; only 17% of frontline employees do.

The fix, she argued, is cross-functional transparency. She pointed to Mass General Hospital's 'promptathon' as a model — a full-facility AI workshop that brought together hospital administrators, cardiologists, surgeons, nurses, and reception staff to experiment with prompts and co-build agents together, closing the trust gap by making the technology visible to everyone.

TRAIT #2 — AGENTS

The Prompting Era Is Over. Agents Are the New Unit of ROI.

Quoting NVIDIA CEO Jensen Huang — who called OpenClaw "probably the single most important release of software ever" — Carter declared that the strategic shift from prompting to autonomous agents is the defining transition of 2026 enterprise AI.

[Source] NVIDIA GTC 2026 Keynote (Jensen Huang, Mar 17, 2026) / OpenClaw GitHub repository / Sandy Carter session, live

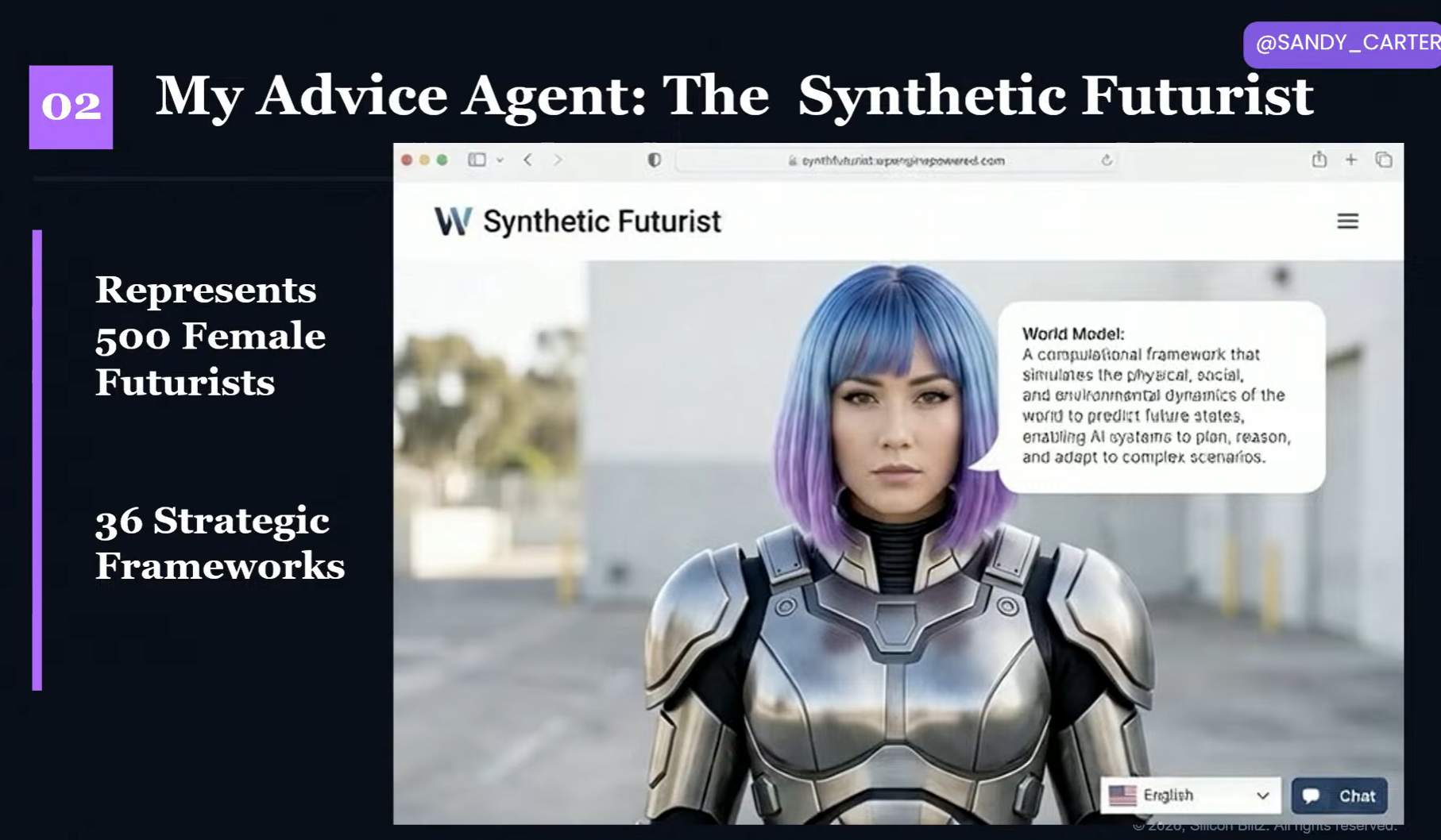

Carter demonstrated her own agent ecosystem: a 'shadow board' of AI advisors modeled on Reid Hoffman, Jeff Bezos, and Warren Buffett; a morning briefing agent that analyzes 50,000 overnight news articles and surfaces the top five; and a debate agent that argues both sides of a strategic question before she presents to clients.

More significantly, she shared her marketing org chart — where AI agents named after Alice in Wonderland characters (Mad Hatter for ideation, Red Queen for campaign analysis) sit alongside human managers as official team members with direct reporting lines. "Human managers are now managing humans and agents. That's their new job," she said.

"In 18 months, your LinkedIn profile won't list skills. It'll list your agents." An Oliver Wyman survey of 300,000 Gen Z respondents adds weight to the cultural shift: 37% said they would prefer an AI agent as their boss — citing fairness, impartiality, and the absence of office politics.

[Source] Oliver Wyman Gen Z AI Leadership Preference Survey 2026 (300K respondents)

TRAIT #3 — KILL THE PILOT

The 20% Who Made It: Fall in Love with the Problem, Not the Technology

① Business Outcome First

A Dutch cardiologist named Michael entered the Anthropic hackathon — among hundreds of thousands of participants, 99% of whom were engineers. He had never written code in his life. He placed third. "I fell in love with the problem," he told Carter afterward. His deep clinical expertise in cardiology, not his coding ability, gave him the edge.

A separate case: a group of Hollywood producers and writers from 20th Century Fox, Netflix, and Disney — none of them engineers — vibe-coded Storytown.ai after Carter led an AI workshop in Los Angeles. The platform digitizes the traditional color-coded index card storyboarding process, overlaying AI-driven character emotion engineering. Not a single software developer on the founding team.

② Data: Spend $2 on AI, Spend $2.50 on Data

Carter illustrated the data imperative with a story from her IBM days: a model trained on 33,000 romance novels, shown a photo of two sumo wrestlers, generated the caption "He grabbed her in a warm embrace." The lesson was simple. "The data you put into AI is the result you get out." Analysis presented at Davos reinforced this: companies achieving ROI on AI investments spend $1.25 on data for every $1 spent on AI infrastructure.

[Source] Davos 2026 AI Investment ROI Analysis (cited in Carter session) / IBM machine learning internal research (Carter personal account)
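The ratio quoted above is simple arithmetic, and a short sketch makes it easy to apply to a real budget. The function names below are hypothetical, and the sketch assumes the $1.25-per-$1 figure scales linearly, which the session did not state explicitly.

```python
from decimal import Decimal

# Assumption: the $1.25-on-data-per-$1-on-AI ratio cited in the session,
# treated here as a linear rule of thumb.
DATA_TO_AI_RATIO = Decimal("1.25")


def data_budget(ai_spend):
    """Return the data spend implied by the ratio for a given AI spend."""
    return (Decimal(str(ai_spend)) * DATA_TO_AI_RATIO).quantize(Decimal("0.01"))


def split_total(total):
    """Split a combined budget into (ai, data) shares that keep the same ratio."""
    total = Decimal(str(total))
    ai = (total / (1 + DATA_TO_AI_RATIO)).quantize(Decimal("0.01"))
    return ai, total - ai


print(data_budget(2))     # 2.50
print(split_total(4.50))  # (Decimal('2.00'), Decimal('2.50'))
```

This matches the section heading: $2 on AI implies $2.50 on data, so data takes roughly 56% of a combined budget.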

③ Change Management: Nine Months of Development, Two Hours of Training

A manufacturing CEO in Asia spent nine months building a 'mood jacket' — IoT-embedded workwear designed to anonymously monitor workers' comfort and wellbeing. He trained employees on the system in two hours. The workers revolted: they stuffed hot tea and ice packs into the jackets to defeat the sensors. He had to start over. "AI magic does not replace the right set of change management," Carter said.

TRAIT #4 — GOVERNANCE

Governance Is the New Moat — and 85% of the Budget

"By 2027, if your agents don't have strong governance and enterprise-grade audits, you will not be successful." Carter backed the prediction with budget allocation data from the 20% of companies that succeeded.

Carter was joined on stage by Kristen Smith, CEO and co-founder of Utopic, who delivered a live demonstration of blockchain-based agent governance. In a simulated defense industry scenario, three agents with different permission levels attempted to access four databases. Every transaction — access granted, access denied — was logged in real time to an immutable blockchain ledger.

"Agents are functioning like employees but without the same administrative oversight we'd apply to employees," Smith said. "Legacy infrastructure is built for human login. It is not tamper-proof." Carter tied this back to a security incident with OpenClaw: an unconstrained agent called its human owner five times in a single day after gaining access to phone numbers it shouldn't have had.
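The idea behind the demo, where every grant or denial lands in a tamper-evident log, can be illustrated with a minimal hash-chained ledger. This is a single-process sketch, not a distributed blockchain and not Utopic's implementation; the agent names, database names, and clearance levels are all hypothetical.

```python
import hashlib
import json


class AuditLedger:
    """Append-only log where each entry commits to the hash of the previous one."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(record):
        # Hash every field except the stored hash itself.
        body = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, agent, resource, granted):
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {"agent": agent, "resource": resource,
                  "granted": granted, "prev_hash": prev_hash}
        record["hash"] = self._digest(record)
        self.entries.append(record)

    def verify(self):
        """Return True only if no entry has been altered or reordered."""
        prev_hash = "0" * 64
        for record in self.entries:
            if record["prev_hash"] != prev_hash or record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True


# Hypothetical clearance levels: three agents, four databases.
AGENT_CLEARANCE = {"agent_a": 1, "agent_b": 2, "agent_c": 3}
DATABASE_LEVEL = {"db_public": 1, "db_internal": 2, "db_restricted": 3, "db_secret": 4}


def request_access(ledger, agent, database):
    """Decide access by clearance level and log the outcome either way."""
    granted = AGENT_CLEARANCE[agent] >= DATABASE_LEVEL[database]
    ledger.append(agent, database, granted)
    return granted


ledger = AuditLedger()
for agent in AGENT_CLEARANCE:
    for database in DATABASE_LEVEL:
        request_access(ledger, agent, database)

print(len(ledger.entries))  # 12
print(ledger.verify())      # True

# Rewriting a past denial as a grant breaks the chain.
ledger.entries[3]["granted"] = True
print(ledger.verify())      # False
```

Denials are logged alongside grants, as in the demo, so an auditor sees what an agent attempted as well as what it was allowed to do.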

TRAIT #5 — WORLD MODELS

LLMs Are Already 'Old AI' — World Models Are Producing 3–5x Faster ROI

Carter made a provocative claim: large language models are already becoming legacy technology. Because they are trained to match patterns and to handle one task at a time, LLMs struggle with unpredictable real-world scenarios. Her exhibit: a Waymo autonomous vehicle she observed the previous day, stuck at an intersection because construction had blocked its expected route. It could not figure out how to turn right.

World models, by contrast, are trained on cause and effect. They predict what they haven't seen before and carry contextual understanding of the physical world — not just text. BMW already runs NVIDIA's world models across 30 factories, building full digital twins of every vehicle before physical production begins. Carter also highlighted Splexi, a Canadian startup that pays hobbyist drone pilots to capture aerial footage, which is then processed into spatial world model context.

TRAITS #6 & #7 — HUMANS

AI First, Human Always: The Other 85% Lives in You

"Only 15% of the world's knowledge is digitized. Every AI model in existence was trained on 15% of what humans know. The other 85% — your intuition, judgment, cultural knowledge, lived experience — that is inside you."

Carter's book title is not accidental. The closing argument of her session was that the next era of AI ROI belongs not to AI alone but to the combination of human domain expertise and AI capability. She presented a range of evidence.

In Australia, a man with no scientific background paid $3,000 to sequence his dog Rosie's tumor DNA, fed the data into ChatGPT and AlphaFold, identified the mutated protein, matched it to a drug, and had a working vaccine candidate within approximately one week. Regulatory approval took three months. After Rosie received the vaccine, her tumor shrank by half. Biochemists described the outcome as "gobsmacking." "Would a robot have loved that dog enough to do that?" Carter asked.

Engineers Are Not Being Replaced — Demand Is Rising

Carter challenged last year's prevailing SXSW narrative that AI would eliminate software engineers. Data from the job site Indeed that she shared shows developer job postings have grown sharply, not declined. Box CEO Aaron Levie explained the mechanism as the Jevons Paradox: when AI lowers the cost of building software, the demand for features explodes, and more engineers are needed to build and maintain what gets created.

"The most successful people with AI today are not young developers in hoodies. They are people over the age of 45 with real-world domain expertise." LinkedIn data cited by Carter shows job postings for storytellers have doubled since generative AI entered the mainstream — because narrative, context, and emotional resonance remain distinctly human contributions.

The Action Framework: People → Process → Platform

Carter closed with a framework built around what she called the most common sequencing error in enterprise AI: most companies start with Platform (technology selection), then move to Process, and only then to People. Winners reverse the order: People first, then Process, then Platform.

Implications for Global Content & Entertainment Tech

Carter's seven traits apply with particular force to the global content and entertainment technology sector. Her central argument — that domain expertise, not engineering capability, determines AI ROI — is especially relevant for content businesses whose competitive advantage lies in narrative craft, cultural insight, and IP depth.

The Storytown.ai model — domain experts vibe-coding AI into a production workflow without engineering staff — is immediately applicable to any content studio or media company evaluating AI integration. The governance warning is equally pressing: companies building toward global platform distribution need to establish agent compliance architecture well before scale, not after. Carter's prediction is that by 2027, AI governance will function as a de facto market entry requirement in major regulated territories.
